As our Virtual Reality (VR) training project with First Commonwealth Credit Union was wrapping up, I found myself reflecting on the kind of product we’ve been building over the past couple of years. As a product design lead, this project pushed me to revisit a fundamental question: what are we actually designing?

VR Training: Rethinking What “Immersion” Really Means

For our VR Training Academy, at a high level, we’re building Artificial Intelligence (AI)-powered VR training experiences where frontline staff can practice real-world conversations in a safe, repeatable environment. The goal is simple: help people build confidence and shift toward more member-centric, consultative interactions. But when I look back at a similar project we did two years ago, I realize how much our understanding of that goal has evolved.

In 2024, we partnered with Ent Credit Union (now Wings) to simulate member-facing conversations in VR. The experience was structured, guided, and predictable. We designed clear flows, defined interaction paths, and built a conversational agent that behaved in expected ways. From a UX perspective, it worked. It gave us a solid foundation and shaped our early definition of what “immersive training” could be.

But through our work with First Commonwealth, it became clear that we were no longer solving the same problem. Previously, we were designing experiences that users could complete. But in 2026, we were responsible for designing conversations that users could actively participate in and shape. The system is no longer fully predictable, and that’s intentional.

Immersion is no longer about space. It’s about cognition.

True immersion isn’t just about placing users in a virtual environment. It’s about whether they start thinking, responding, hesitating, and making decisions the way they would in real life.

From Scripted to Generative: A Shift in What We Design

As our understanding of immersion evolved, so did our design. The biggest shift in this project wasn’t visual fidelity or hardware. It was the design object itself, and how users interacted with the system.

Previously, we designed pages, flows, and interaction paths. It was a system we could control and validate. But with First Commonwealth, we were now designing a conversational system, one that is dynamic, open-ended, and inherently unpredictable.

At Ent, we delivered a highly scripted conversation through Bob, a prototypical member who reflected the background, physicality, and even personality of an Ent member.

For First Commonwealth, this AI-driven member avatar evolved into Maya, whose motivations and context were informed by the kind of member persona First Commonwealth most wanted to engage with in this initial pilot. From Bob to Maya, we moved from a scripted experience to a generative conversation system. AI was no longer executing logic; it was participating in dialogue.

Now, our system interprets tone, intent, emotional cues, and even missing information. We are no longer designing a path for users to follow. We are designing a system users can actively shape through interaction.

At the same time, interaction itself has evolved. By threading together voice interfaces, hand gesture interactions, and an adaptive UI that responds to conversation context, we created a more natural communication environment.

In this environment, users are no longer “operating a system”. They are participating in a conversation. Real-time transcripts, captions, and natural pauses, elements we might have once considered friction, now contribute to authenticity. Because real conversations are not perfectly smooth, and we are training for reality.

People pause, hesitate, rephrase, and think out loud. As the system begins to accommodate these imperfections, the design goal shifts from helping users complete tasks efficiently to helping them express themselves more authentically.

From Completion to Behaviour: Rethinking Feedback

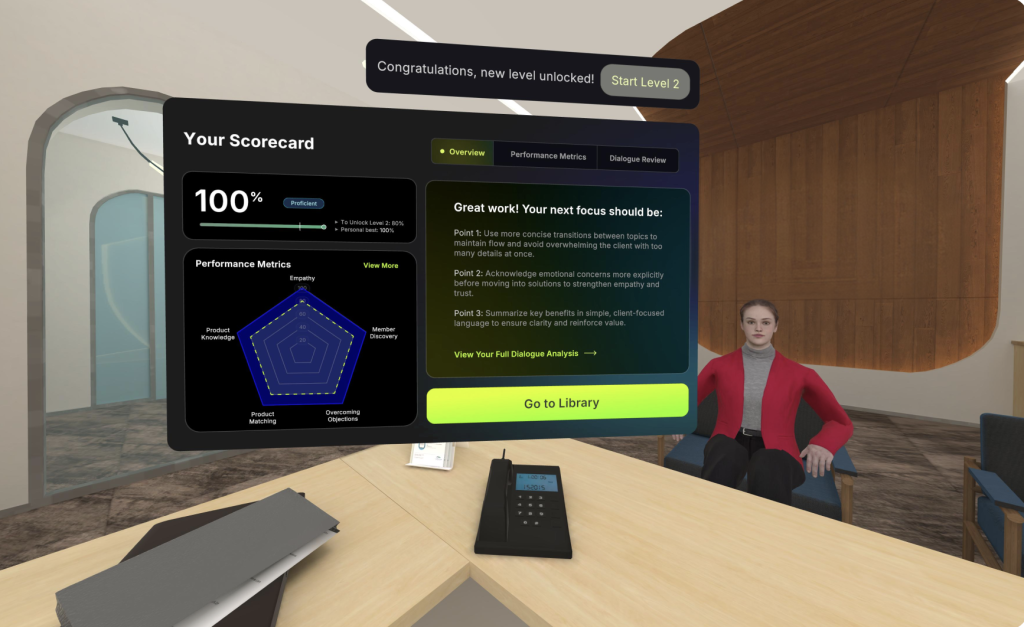

Another major shift came from how we think about feedback. Instead of measuring whether or not a user completed a full interaction, and how long it took them to get there, we’re now trying to understand how they communicate: did they demonstrate empathy, uncover real needs, make appropriate recommendations, and respond effectively under uncertainty?

In other words, the question behind ‘training’ shifted from “Did you do it right?” to “How did you do it?”

This is reflected in the design of a behaviour-driven Personalized Scorecard. Rather than just showing results, it visualizes how users perform during these interactions. Then, it maps those behaviours to business-critical metrics like empathy, discovery, product alignment, and objection handling. This shifts coaching from subjective feedback to tangible and discussable areas of development.

From a human-centered perspective, this is significant. We’re taking behaviours that were previously hard to articulate and making them visible, understandable, and improvable.

But it also introduces a new layer of complexity: Does the system truly understand user intent?

One user pointed out that even saying “I understand” in a sarcastic or disengaged tone could still result in a high empathy score. This highlights a subtle but important boundary:

Keywords are not the same as behaviour.
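To make that boundary concrete, here is a minimal Python sketch. It is purely illustrative and does not describe how the Scorecard is actually implemented: the phrase list, the `tone` label (assumed to come from some upstream tone classifier), the function names, and the penalty value are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical phrase list, used only for illustration.
EMPATHY_PHRASES = ("i understand", "that makes sense", "i hear you")


@dataclass
class Utterance:
    text: str
    tone: str  # assumed label from an upstream tone classifier, e.g. "warm" or "sarcastic"


def keyword_empathy_score(utterance: Utterance) -> float:
    """Naive scorer: looks only at the words, never at the delivery."""
    text = utterance.text.lower()
    hits = sum(phrase in text for phrase in EMPATHY_PHRASES)
    return min(1.0, hits / 2)


def tone_aware_empathy_score(utterance: Utterance) -> float:
    """Keyword score, discounted when the assumed tone label contradicts the words."""
    base = keyword_empathy_score(utterance)
    if utterance.tone in {"sarcastic", "disengaged"}:
        return base * 0.25  # arbitrary illustrative penalty
    return base


# Identical words, different delivery.
sincere = Utterance("I understand, that makes sense.", tone="warm")
sarcastic = Utterance("I understand, that makes sense.", tone="sarcastic")

print(keyword_empathy_score(sincere), keyword_empathy_score(sarcastic))
print(tone_aware_empathy_score(sincere), tone_aware_empathy_score(sarcastic))
```

In this sketch, the keyword-only scorer gives both utterances the same score because the words are identical. Only a scorer that also considers delivery can tell them apart.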

And this leads to a deeper realization:

Designing AI systems is not just about testing behaviour. It’s about designing the rules that define behaviour.

If traditional UX focused on usability, AI-driven systems require us to design how the system interprets users, how it makes decisions, and whether those decisions are explainable and trustworthy.

Because once we start measuring behaviour, accuracy becomes more than a technical issue. It directly affects whether users trust the system and whether they are willing to change based on its feedback.

In that sense, the Scorecard is not just an evaluation tool. It’s part of a learning system that helps users see how they interact with others and adjust over time.

From Collaboration to Experience: Designing With, Not For

Another noticeable shift in this project was how we worked. We entered the problem space earlier, using workshops and sprint reviews to deeply understand the business context, and continuously incorporated feedback from Member Experience Associates (MXAs) we dubbed ‘Champions’. These Champions were users across different branches who aimed to push our solution to the limit weeks before we ever set foot in a First Commonwealth branch or officially deployed the training.

This allowed the design to move beyond responding to requirements and instead be shaped by real usage scenarios.

The problem First Commonwealth was solving wasn’t just about improving sales training. It was about helping MXAs transition from transactional order-takers to confident, consultative communicators.

Champions played a critical role here. They weren’t just users; they were interpreters, validators, and amplifiers of real-world behaviours. Much of what we learned about effective conversations didn’t come from design documentation, but from how they interacted with the system.

This makes the boundary of UX clearer. We are not just designing for users. We are designing with them.

And often, the most meaningful signals don’t come from features, but from small moments.

Someone said, “If you say the wrong thing, nobody’s going to yell at you.” Another asked immediately, “Can I try again?” Others mentioned that the experience made selling feel more like helping.

These are simple reactions, but they point to something fundamental:

This is a truly safe-to-fail environment.

And what that enables is not correctness, but growth.

Learning doesn’t happen when people are being evaluated. It happens when people feel safe enough to try, fail, and try again.

Compared to traditional role play, VR creates a more private and low-pressure environment. Users can practice repeatedly without being observed, gradually building confidence and developing their own communication style.

It’s within these small behavioural shifts that we start to see where design truly makes an impact.

From Complexity to Scale: What Are We Really Designing?

As the system becomes more generative and open-ended, we’re starting to see new signals. These “issues” aren’t necessarily problems; they’re often the product revealing its next layer of design opportunity.

As the system approaches real-world complexity, it also gains the potential to create real-world impact.

Champions asked for more pushback in conversations. They wanted resistance, not smooth progression. Others noted that the experience still felt guided rather than uncertain.

These aren’t complaints. They’re expectations.

They signal that we’ve moved from making the experience work to making it real.

And the goal of training shifts accordingly: from mastering paths to making decisions under uncertainty.

In reality, conversations are not linear. They involve ambiguity, emotion, and constant adjustment. What matters isn’t whether someone follows a script, but whether they can make good judgments.

This also reveals a clear direction for product design. Beginners benefit from structure. High performers need complexity.

Different users require different levels of uncertainty.

Looking ahead, the opportunity is not just to make training more complex, but to make it more nuanced, more human, and more reflective of real-world ambiguity.

Which leads to a bigger question: Can this system actually change behaviour over time?

We will soon find out through First Commonwealth’s two-month pilot across three branches, tens of MXAs, and hundreds of hours of training. The pilot is not just about usability. It’s about whether the product can integrate into real workflows, be used consistently, and influence actual performance.

Because ultimately, a product’s effectiveness is not defined by its design, but by its adoption. It needs to be understood, trusted, and used repeatedly until it becomes part of everyday work.

If that happens, the impact goes beyond individual improvement. It starts shaping how an organization defines a “good conversation”.

That is the real transition: from usable, to scalable.

Final Reflection

Looking back from Ent to First Commonwealth, what stands out to me is not just that the product became more advanced, but that our understanding of learning has fundamentally shifted.

We’ve moved from designing for flow, correctness, and completion to designing for behaviour, confidence, and decision-making under uncertainty.

As designers, our role is evolving as well. We are no longer just designing interfaces or flows. We are designing systems that allow people to practice, adapt, and gradually form their own judgment.

In the end, we’re not just building a VR × AI training product.

We’re designing how people communicate, build trust, and make decisions in the real world.

And perhaps that’s what we’ve really been designing all along.

Ready to move beyond traditional training?

At Aequilibrium, we don’t just build immersive environments; we design the behavioural “muscle memory” that helps your team lead with conviction. Whether you are looking to shift your culture from “order-taking” to consultative excellence or want to provide a truly safe-to-learn space for your staff to fail and grow, we’re here to help you bridge that gap.

Let’s explore what’s possible for your organization. We are offering free consultations to discuss how AI-powered VR training can be tailored to your specific business goals, mapping behavioural data directly to your real-world KPIs.